Uncategorized

-

How to implement a research paper from start to finish

I’ve just finished making a video course that walks through how to implement a research paper. Actually, it’s more like implementing several research papers, plus a smattering of topics. Why? Implementing a paper is a weird skill. I haven’t found any tutorial for how to do it, and assume that you either learn it by…

-

No, you don’t need a moat

A leaked Google memo is going around and it’s worth a read. The author (an AI researcher at Google) thinks that Google has no strategic edge in the future of AI and needs to change direction. The analysis is good, but the conclusion is wrong. In this post:

-

From the archive: GLUE, QA Systems, and more

Crossposting some old content: GLUE Explained: Understanding BERT Through Benchmarks; How To Build Your Own Question Answering System; 2020 NLP and NeurIPS Highlights; Domain-Specific BERT Models.

-

XLNet Fine-Tuning Tutorial with PyTorch

Another one! This is nearly the same as the BERT fine-tuning post but uses the updated huggingface library. (There are also a few differences in the preprocessing XLNet requires.)

-

BERT Fine-Tuning Tutorial with PyTorch

Here’s another post I co-authored with Chris McCormick on how to quickly and easily create a SOTA text classifier by fine-tuning BERT in PyTorch. This was created when BERT was pretty new and exciting, but the tooling for it was quite bad. Huggingface hosted the model, but documentation was very poor. As a result of…

-

BERT Word Embeddings Tutorial

Check out the post I co-authored with Chris McCormick on BERT Word Embeddings here. We take an in-depth look at the word embeddings produced by BERT, show you how to create your own in a Google Colab notebook, and share tips on how to implement and use these embeddings in your production pipeline. This was created…

-

Broyden’s Method in Python

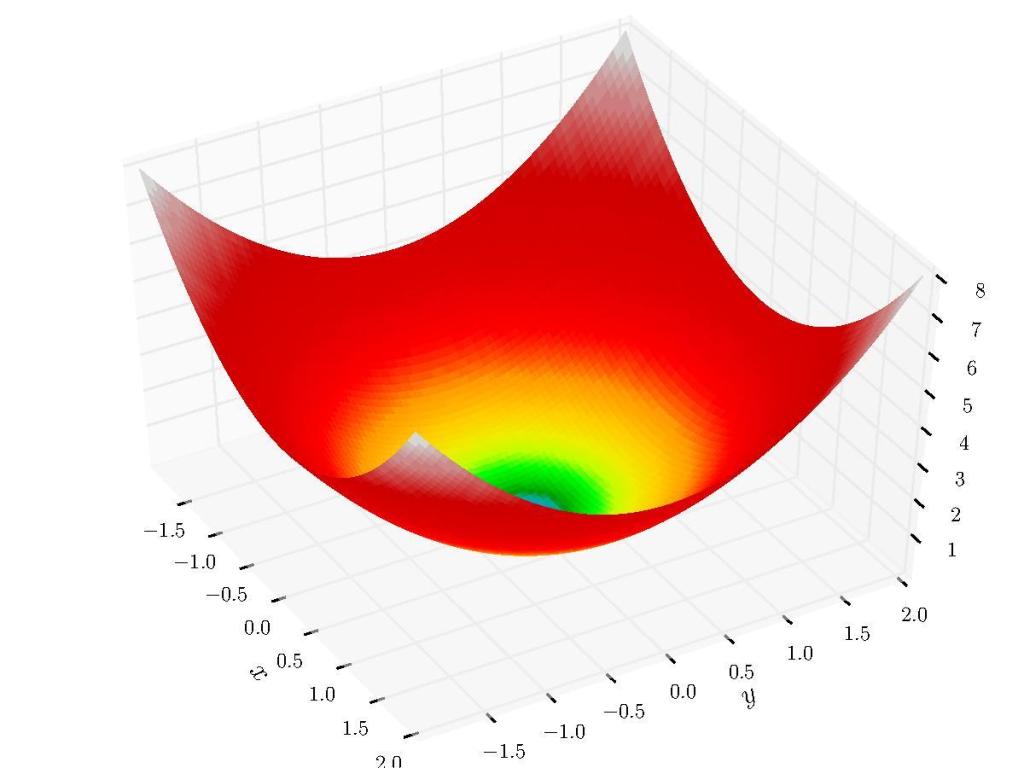

In a previous post we looked at root-finding methods for single-variable equations. In this post we’ll extend quasi-Newton methods to the multivariable case and look at one of the more widely used algorithms today: Broyden’s Method.
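As a rough illustration of the idea, here is a minimal sketch of Broyden’s “good” method in NumPy. It is not the code from the post itself: the function name `broyden`, the forward-difference initial Jacobian, the tolerances, and the example system are all assumptions made for this sketch.

```python
import numpy as np

def broyden(f, x0, tol=1e-10, max_iter=100):
    """Approximate a root of f: R^n -> R^n with Broyden's "good" method (sketch)."""
    x = np.asarray(x0, dtype=float)
    fx = np.asarray(f(x), dtype=float)
    n = x.size

    # Assumption: seed the Jacobian estimate with forward differences.
    # Other choices (e.g. the identity matrix) are also common.
    h = 1e-6
    J = np.empty((n, n))
    for j in range(n):
        step = np.zeros(n)
        step[j] = h
        J[:, j] = (np.asarray(f(x + step), dtype=float) - fx) / h

    for _ in range(max_iter):
        if np.linalg.norm(fx) < tol:
            break
        # Quasi-Newton step: solve J dx = -f(x) using the current estimate of J.
        dx = np.linalg.solve(J, -fx)
        x_new = x + dx
        f_new = np.asarray(f(x_new), dtype=float)
        df = f_new - fx
        # Rank-one update so the new estimate satisfies the secant condition J dx = df.
        J += np.outer(df - J @ dx, dx) / (dx @ dx)
        x, fx = x_new, f_new
    return x

# Hypothetical example system: a line intersected with a circle of radius 3.
def system(v):
    x, y = v
    return np.array([x + y - 3.0, x**2 + y**2 - 9.0])

root = broyden(system, [1.0, 5.0])
```

The point of the rank-one update is that the Jacobian is never recomputed after the first iteration, so each step costs only one extra evaluation of `f`.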

-

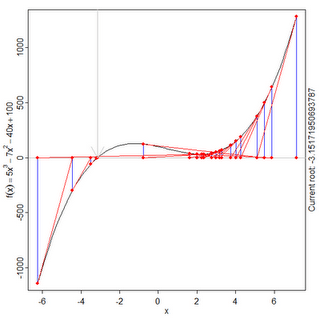

Root-Finding Algorithms Tutorial in Python: Line Search, Bisection, Secant, Newton-Raphson, Inverse Quadratic Interpolation, Brent’s Method

Motivation: How do you find the roots of a continuous polynomial function? Well, if we want to find the roots of something like:

-

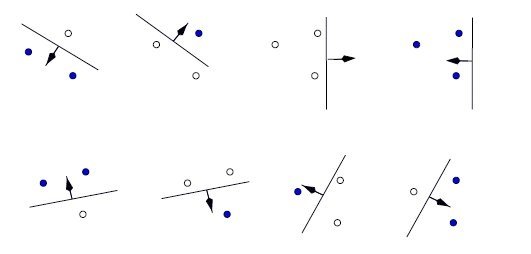

Statistical Learning Theory: VC Dimension, Structural Risk Minimization

Sometimes our models overfit, sometimes they underfit. A model’s capacity is, informally, its ability to fit a wide variety of functions. As a simple example, a linear regression model with a single parameter has a much lower capacity than a linear regression model with multiple polynomial parameters. Different datasets demand models of different capacity, and…

-

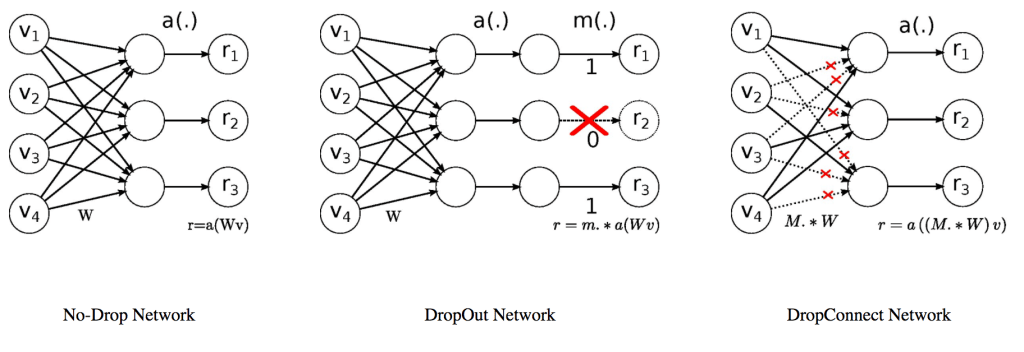

DropConnect Implementation in Python and TensorFlow

I wouldn’t expect DropConnect to appear in TensorFlow, Keras, or Theano since, as far as I know, it’s used pretty rarely and doesn’t seem as well-studied or demonstrably more useful than its cousin, Dropout. However, there don’t seem to be any implementations out there, so I’ll provide a few ways of doing so.
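For a sense of what this looks like, here is a minimal NumPy sketch of a DropConnect dense layer. It is one possible approach under stated assumptions, not necessarily any of the implementations from the post: the name `dropconnect_dense`, the single mask shared across the batch, and the inverted scaling by `keep_prob` (instead of the inference-time approximation in the original DropConnect paper) are all simplifications chosen for illustration.

```python
import numpy as np

def dropconnect_dense(x, W, b, keep_prob=0.5, training=True, rng=None):
    """Dense layer that randomly drops individual weights during training (sketch).

    x: (batch, in_features), W: (in_features, out_features), b: (out_features,)
    """
    if not training:
        # Assumption: with inverted scaling during training, inference
        # just uses the full weight matrix.
        return x @ W + b

    rng = np.random.default_rng() if rng is None else rng
    # Dropout masks activations; DropConnect masks the weights themselves.
    # Simplification: one mask for the whole batch rather than one per example.
    mask = rng.random(W.shape) < keep_prob
    # Scale the surviving weights so the expected pre-activation is unchanged.
    return x @ (W * mask / keep_prob) + b

# Hypothetical usage with random data.
rng = np.random.default_rng(0)
x = rng.normal(size=(4, 3))
W = rng.normal(size=(3, 2))
b = np.zeros(2)
train_out = dropconnect_dense(x, W, b, keep_prob=0.5, training=True, rng=rng)
eval_out = dropconnect_dense(x, W, b, training=False)
```

The biases are left unmasked here, which is a design choice of this sketch.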