NLP

-

XLNet Fine-Tuning Tutorial with PyTorch

Another one! This is nearly the same as the BERT fine-tuning post but uses the updated huggingface library. (XLNet's preprocessing also differs in a few ways.)

-

BERT Fine-Tuning Tutorial with PyTorch

Here’s another post I co-authored with Chris McCormick on how to quickly and easily create a SOTA text classifier by fine-tuning BERT in PyTorch. This was created when BERT was pretty new and exciting, but the tooling for it was quite bad. Huggingface hosted the model, but documentation was very poor. As a result of…

-

BERT Word Embeddings Tutorial

Check out the post I co-authored with Chris McCormick on BERT Word Embeddings here. We take an in-depth look at the word embeddings produced by BERT, show you how to create your own in a Google Colab notebook, and offer tips on how to implement and use these embeddings in your production pipeline. This was created…

-

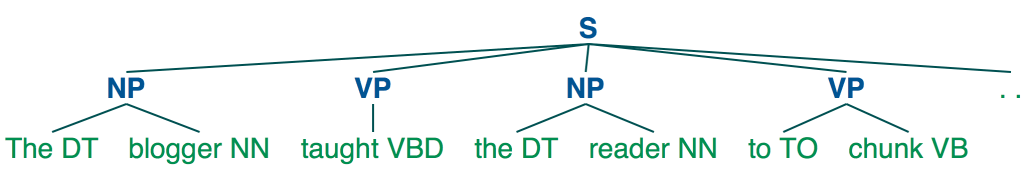

Shallow Parsing for Entity Recognition with NLTK and Machine Learning

Getting Useful Information Out of Unstructured Text

Let’s say that you’re interested in performing a basic analysis of the US M&A market over the last five years. You don’t have access to a database of transactions and don’t have access to tombstones (public advertisements announcing the minimal details of a closed deal, e.g. ABC acquires XYZ for…

-

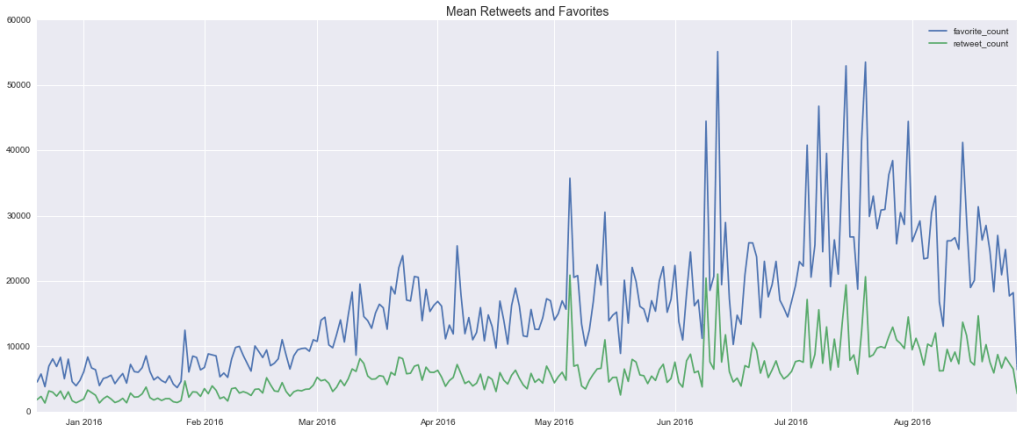

Trump Tweet Analysis

This project stems from two overarching questions: Which emotions do politicians most frequently appeal to? I recently saw a BuzzFeed presentation on, among other things, the virality of BuzzFeed content. A big part of their business relies on understanding what kind of content goes viral and why, so their data science team understandably spends a lot…

-

Article Classification and News Headlines Over Time

How does front page news track a single topic over a period of time? What’s the media’s attention span for a given story? In general, many find it surprising how quickly major media outlets shift their attention from one story to another. This is partly a reflection of our own attention spans and appetites, and…